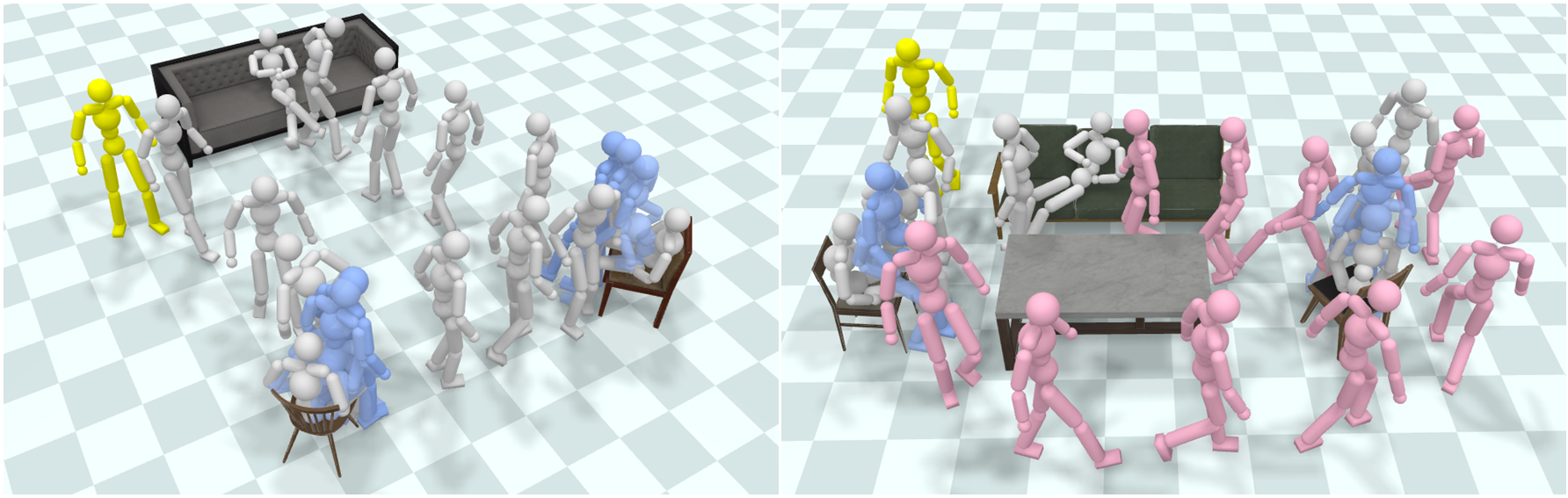

We present a physics-based character control framework for synthesizing human-scene interactions. Recent advances adopt physics simulation to mitigate artifacts produced by data-driven kinematic approaches. However, existing physics-based methods mainly focus on single-object environments, which limits their applicability in realistic 3D scenes containing multiple objects. To address this limitation, we propose a framework that enables physically simulated characters to perform long-term interaction tasks in diverse, cluttered, and unseen 3D scenes. The key idea is to decouple human-scene interactions into two fundamental processes, Interacting and Navigating, which motivates us to construct two reusable controllers, namely InterCon and NavCon. Specifically, InterCon uses two complementary policies that enable characters to enter or leave the interacting state with a particular object (e.g., sitting on a chair or getting up). To realize navigation in cluttered environments, we introduce NavCon, in which a trajectory-following policy enables characters to track pre-planned collision-free paths. Benefiting from this divide-and-conquer strategy, we can train all policies in simple environments and directly apply them in complex multi-object scenes through coordination by a rule-based scheduler.
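
To make the coordination idea concrete, the following is a minimal sketch of how a rule-based scheduler might switch between a navigation controller and the two interaction policies. It is an illustration under stated assumptions, not the scheduler used in this work: the names `Mode`, `SchedulerState`, and `step_scheduler`, the distance threshold `reach_radius`, and the fixed interaction duration `hold_for` are all hypothetical.

```python
import math
from dataclasses import dataclass
from enum import Enum, auto


class Mode(Enum):
    NAVIGATE = auto()  # NavCon: track a pre-planned collision-free path
    ENTER = auto()     # InterCon: enter the interacting state (e.g., sit down)
    LEAVE = auto()     # InterCon: leave the interacting state (e.g., get up)
    DONE = auto()      # all target objects have been visited


@dataclass
class SchedulerState:
    mode: Mode = Mode.NAVIGATE
    target_index: int = 0  # index of the object currently being approached
    hold_steps: int = 0    # steps spent in the interacting state so far


def step_scheduler(state, character_xy, targets, reach_radius=0.5, hold_for=3):
    """Advance the hypothetical scheduler by one step and return the active mode.

    Assumed rules (not taken from the paper):
      - NAVIGATE -> ENTER once the character is within `reach_radius` of the target.
      - ENTER -> LEAVE after `hold_for` scheduler steps of interaction.
      - LEAVE -> NAVIGATE for the next target, or DONE if none remain.
    """
    if state.mode is Mode.DONE or state.target_index >= len(targets):
        state.mode = Mode.DONE
        return state.mode

    if state.mode is Mode.NAVIGATE:
        target_xy = targets[state.target_index]
        if math.dist(character_xy, target_xy) <= reach_radius:
            state.mode = Mode.ENTER
            state.hold_steps = 0
    elif state.mode is Mode.ENTER:
        state.hold_steps += 1
        if state.hold_steps >= hold_for:
            state.mode = Mode.LEAVE
    elif state.mode is Mode.LEAVE:
        state.target_index += 1
        has_next = state.target_index < len(targets)
        state.mode = Mode.NAVIGATE if has_next else Mode.DONE

    return state.mode
```

In this sketch each mode would select which pre-trained policy drives the simulated character at that step, so the policies themselves never need to see the full multi-object scene; only the scheduler tracks which object comes next.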